The Short Version

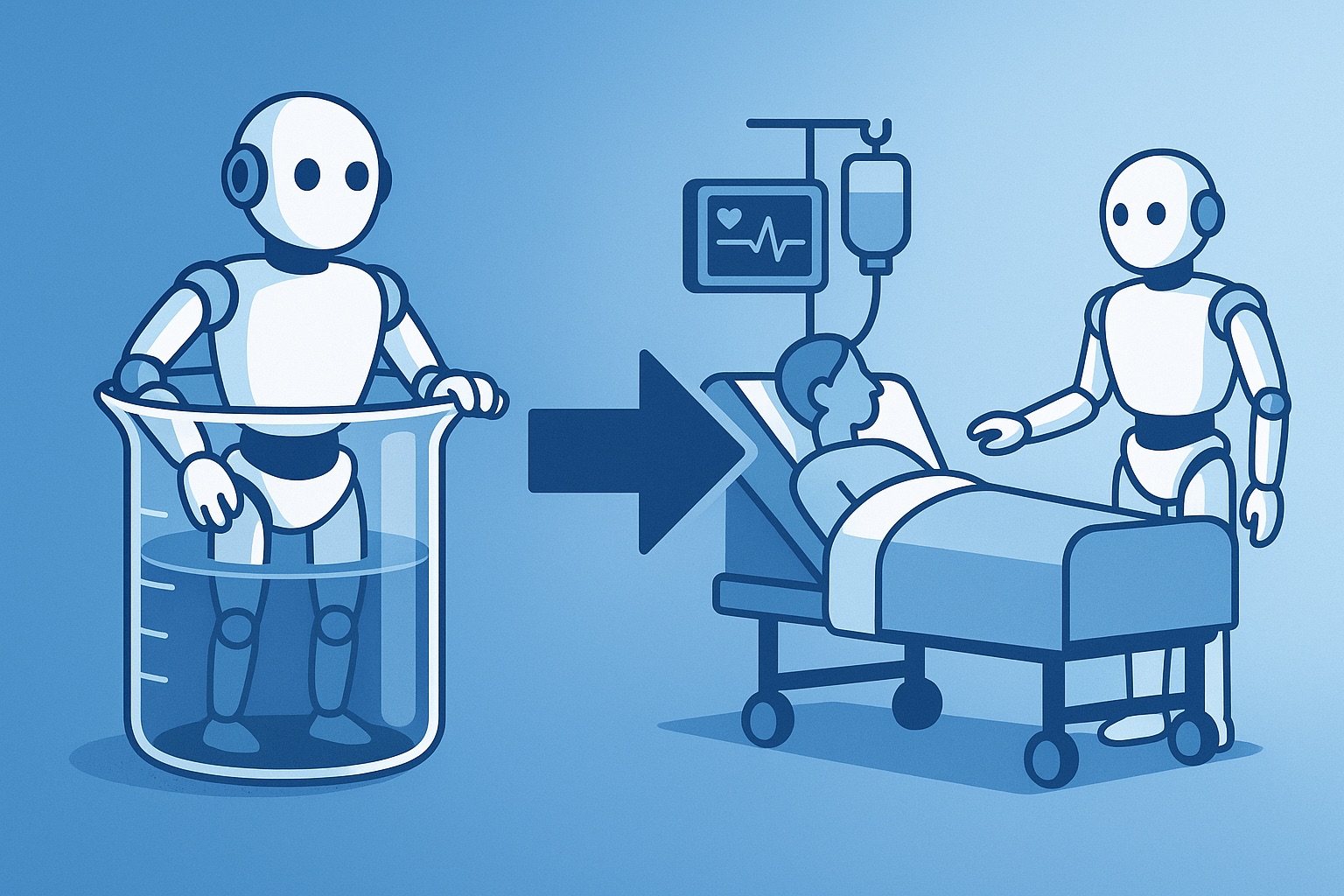

- Multiple health systems are now running autonomous AI agents in production clinical workflows, moving past the pilot phase.

- The successful deployments share a common pattern: strong data foundations, clear governance, and human override paths designed before deployment.

- The gap between "AI that advises" and "AI that acts" is closing, and most organizations are not operationally ready for the shift.

What Happened

Three developments this week signal that agentic AI in healthcare has crossed from proof-of-concept to production:

Medication reconciliation at discharge. A major academic medical center reported that its AI agent now handles initial medication reconciliation for 60% of discharges. The agent pulls the patient's medication list from the EHR, cross-references it with pharmacy fill data, flags discrepancies, and generates a draft reconciliation for pharmacist review. Time to complete reconciliation dropped from 22 minutes to 7 minutes per patient.
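The cross-referencing step can be pictured as a simple comparison of two lists. The sketch below is illustrative only and is not the medical center's implementation: `MedEntry`, `find_discrepancies`, and the sample drugs and doses are hypothetical, and a real system would match on coded drug identifiers and consider frequency, route, and fill dates rather than just name and dose.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MedEntry:
    """One medication line (hypothetical shape, for illustration only)."""
    name: str
    dose_mg: float


def find_discrepancies(ehr_list, fill_list):
    """Compare the EHR medication list with pharmacy fill data.

    Returns a list of (drug_name, reason) tuples for a pharmacist to review.
    Names are matched case-insensitively; if a drug appears twice in one
    list, only the last entry is compared (a simplification).
    """
    ehr = {m.name.strip().lower(): m for m in ehr_list}
    fills = {m.name.strip().lower(): m for m in fill_list}

    flags = []
    for name in sorted(ehr.keys() | fills.keys()):
        in_ehr, in_fill = ehr.get(name), fills.get(name)
        if in_fill is None:
            flags.append((name, "in EHR list, no pharmacy fill"))
        elif in_ehr is None:
            flags.append((name, "pharmacy fill, not in EHR list"))
        elif in_ehr.dose_mg != in_fill.dose_mg:
            flags.append(
                (name, f"dose mismatch: EHR {in_ehr.dose_mg} mg vs fill {in_fill.dose_mg} mg")
            )
    return flags


if __name__ == "__main__":
    # Made-up sample data, not drawn from any real deployment.
    ehr_meds = [MedEntry("Metformin", 500), MedEntry("Lisinopril", 10)]
    pharmacy_fills = [MedEntry("metformin", 1000), MedEntry("Atorvastatin", 20)]
    for drug, reason in find_discrepancies(ehr_meds, pharmacy_fills):
        print(f"{drug}: {reason}")
```

The function only flags discrepancies. It resolves nothing, which mirrors the deployment described above, where the draft goes to a pharmacist for review.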

Autonomous prior authorization. Two regional health systems deployed AI agents that handle prior authorization end-to-end for a defined set of procedures. The agent reviews clinical documentation, maps it to payer requirements, submits the authorization, and tracks the response. Human review is triggered only when the agent's confidence score falls below a set threshold or the payer requests additional documentation.
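The escalation rule described here amounts to a small routing function. What follows is a minimal sketch under stated assumptions, not either health system's actual logic: `PriorAuthCase`, `route_case`, the placeholder procedure codes, and the 0.90 threshold are all hypothetical. The check that a procedure belongs to the approved set is an added assumption, inferred from the "defined set of procedures" scope.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    AUTO_SUBMIT = "auto_submit"
    HUMAN_REVIEW = "human_review"


@dataclass
class PriorAuthCase:
    """Hypothetical case record, for illustration only."""
    procedure_code: str
    confidence: float                      # agent's score, assumed to lie in [0, 1]
    payer_requested_more_docs: bool = False


# Assumed values: placeholder codes and an illustrative threshold.
APPROVED_PROCEDURES = {"PROC-A", "PROC-B"}
CONFIDENCE_THRESHOLD = 0.90


def route_case(case):
    """Return (route, reason) so that every decision can be logged."""
    if case.procedure_code not in APPROVED_PROCEDURES:
        return Route.HUMAN_REVIEW, "procedure outside approved set"
    if case.payer_requested_more_docs:
        return Route.HUMAN_REVIEW, "payer requested additional documentation"
    if case.confidence < CONFIDENCE_THRESHOLD:
        return Route.HUMAN_REVIEW, "confidence below threshold"
    return Route.AUTO_SUBMIT, "within guardrails"


if __name__ == "__main__":
    for case in (
        PriorAuthCase("PROC-A", 0.95),
        PriorAuthCase("PROC-A", 0.62),
        PriorAuthCase("PROC-B", 0.97, payer_requested_more_docs=True),
    ):
        route, reason = route_case(case)
        print(case.procedure_code, route.value, "-", reason)
```

Returning a reason alongside the route is a deliberate choice: it gives reviewers and auditors a record of why each case was or was not escalated.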

Patient scheduling. A multi-site primary care network reported that its AI scheduling agent now handles 40% of inbound scheduling calls without human handoff. The agent verifies identity, checks insurance, matches patient needs to provider availability, and books the appointment.

None of these are chatbots with fancy branding. They are autonomous systems completing multi-step workflows within defined guardrails.

What It Likely Means

The pattern here is important. These are not bleeding-edge research institutions deploying experimental technology. They are operationally mature organizations that invested in data infrastructure, workflow mapping, and governance frameworks before they deployed agents.

That sequence matters. The organizations that tried to deploy agents first and figure out governance later are the ones generating the cautionary headlines about hallucinated codes and misrouted patients.

Here is how I think about the maturity curve:

Stage 1: AI as suggestion engine. The model recommends. A human decides. This is where most organizations are today. Think ambient scribes, coding assistants, and clinical decision support alerts.

Stage 2: AI as delegate. The model acts within pre-approved parameters. A human reviews exceptions. This is where the leading deployments are moving. Think autonomous scheduling, medication reconciliation, and prior auth.

Stage 3: AI as operator. The model manages end-to-end processes with minimal human oversight. Humans set policies and review aggregate outcomes. Nobody in healthcare is here yet, and for good reason.

The jump from Stage 1 to Stage 2 is where most organizations get stuck. The technology is ready; the organizational trust, governance, and liability frameworks are not.

What the Market Might Be Missing

The deployment bottleneck is not technical. The major cloud providers and health IT vendors already offer agentic AI tooling. The constraint is organizational readiness: data quality, workflow documentation, governance policies, and clinical staff buy-in.

Early movers are building compound advantages. Every week an agent runs in production, it generates data that improves its performance, surfaces edge cases that strengthen governance, and builds organizational muscle for managing autonomous systems. Organizations that wait for "the technology to mature" are falling behind organizations that are maturing with the technology.

The liability landscape is shifting faster than expected. Malpractice insurers are beginning to differentiate between organizations that deploy AI with documented governance frameworks and those that deploy it ad hoc. Within 18 months, expect your malpractice premiums to reflect your AI governance maturity.

The Bottom Line

- Start with the workflow, not the technology. Before you deploy an agent, map the workflow it will handle end-to-end: every decision point, every data dependency, every exception path. If you cannot draw the workflow on a whiteboard, the agent cannot execute it reliably.

- Build the governance layer first. Define what the agent is allowed to do, what triggers human review, how decisions are logged, and how you will detect drift. This is not bureaucracy. This is the operating system for autonomous AI. Without it, you are deploying a powerful tool with no safety rails.

- Measure outcomes, not activity. The metric is not "number of prior auths processed by AI." It is "denial rate for AI-processed prior auths vs. human-processed." If the agent processes more volume but generates more denials, you have automated a problem, not solved one.
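The outcome metric in the last point is simple arithmetic. Here is a minimal sketch with made-up counts: the function names and the numbers are hypothetical and do not come from any of the deployments above.

```python
def denial_rate(denied, total):
    """Fraction of submitted prior auths that were denied."""
    if total <= 0:
        raise ValueError("total must be positive")
    return denied / total


def compare_denial_rates(ai_denied, ai_total, human_denied, human_total):
    """Compare AI-processed and human-processed denial rates.

    A positive 'difference' means the AI-processed group was denied more often.
    """
    ai_rate = denial_rate(ai_denied, ai_total)
    human_rate = denial_rate(human_denied, human_total)
    return {
        "ai_rate": ai_rate,
        "human_rate": human_rate,
        "difference": ai_rate - human_rate,
    }


if __name__ == "__main__":
    # Illustrative counts only: 180 of 1,200 AI-processed requests denied,
    # 40 of 400 human-processed requests denied.
    result = compare_denial_rates(180, 1200, 40, 400)
    print(f"AI denial rate:    {result['ai_rate']:.1%}")
    print(f"Human denial rate: {result['human_rate']:.1%}")
    print(f"Difference:        {result['difference']:+.1%}")
```

In this invented example the agent handles three times the volume but is denied more often, which is exactly the "automated a problem" case. A fair comparison also requires that the two groups cover a similar mix of procedures and payers, since agents are often assigned the simpler cases.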